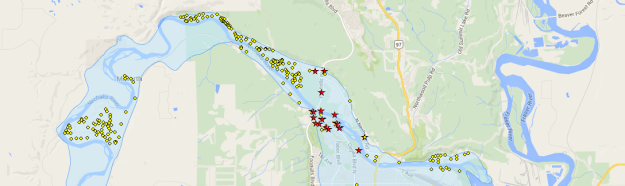

Sometime in the last year, the County of Grande Prairie (Alberta) started providing bare earth and full feature LiDAR through its Open Data Catalogue (LiDAR download map here). Here’s how you can use the data to visualize 3D buildings, using a combination of ArcGIS for Desktop (with Spatial Analyst and LAStools), QGIS, and Cesium JS.

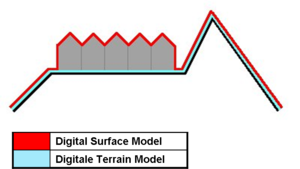

- Find and download the bare earth (digital terrain model = DTM) and full feature (digital surface model = DSM) LiDAR zip files for a quarter section of your choice from the LiDAR viewer (I used the files here and here). To familiarize yourself with the concepts of DSMs and DTMs, read this answer.

- Download LAStools and add the LAStools toolbox to ArcMap (or ArcCatalog).

- Run the las2dem tool from the LAStools toolbox on each of the .las files extracted from your downloaded zip files. You can accept all default parameters.

- The tool should output a .tif image for each surface, projected to UTM Zone 11. If not, run Define Projection to assign the projection.

- By default, both output layers will have the same name (e.g. “710622NW.tif”). Rename them so that geoprocessing tools (and you) can easily tell them apart (e.g. “710622NW_BE.tif” and “710622NW_FF.tif”).

- The DTM and DSM each report elevations in meters above sea level. Since we are interested in the difference between those elevations (i.e. the height of a rooftop above the ground beneath it), we need to subtract the DTM from the DSM. You can do this with the Minus tool or, more generally, the Raster Calculator. I used the expression Int("710622NW_FF.tif" - "710622NW_BE.tif") to return the difference between the DSM and the DTM as integers (Int truncates the decimals, which will simplify things later on).

- Eventually, we are going to want polygons to load into Cesium (on the order of hundreds, or perhaps a few thousand), not individual pixels (there are 700,448 pixels in my image). At this point, we have what is called a classified image, classified by height in 1-meter increments. We want to group neighboring pixels with similar height values into larger regions, which we can do by further processing the classified output. The instructions in this link guide you through filtering, smoothing, and generalizing that output.

- Run Raster to Polygon on your generalized output to produce polygons.

- Cesium is going to want lat/long coordinates, so you may as well run the Project tool on your polygons to switch the data to WGS84.

- As far as I know, ArcGIS does not support GeoJSON (which we are going to use in Cesium). Conveniently, QGIS does. So, load your polygons in QGIS and “Save As…” GeoJSON.

- Upload your GeoJSON to your host.
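If you prefer the command line to the ArcGIS toolbox, the las2dem and renaming steps above can be combined. The sketch below assumes las2dem is on your PATH and uses only its standard `-i` (input) and `-o` (output) options; the folder names in the usage example are hypothetical, chosen because both downloads contain a tile with the same name. As I understand the LAStools README, las2dem picks the raster format from the output file's extension.

```python
import subprocess
from pathlib import Path


def las2dem_command(las_path, suffix):
    """Build a las2dem call that writes <tile>_<suffix>.tif next to the input.

    Only the standard LAStools -i (input) and -o (output) options are used;
    las2dem is assumed to infer GeoTIFF output from the .tif extension.
    """
    las_path = Path(las_path)
    out_path = las_path.with_name(f"{las_path.stem}_{suffix}.tif")
    return ["las2dem", "-i", str(las_path), "-o", str(out_path)]


def run_las2dem(las_path, suffix):
    """Run las2dem (assumed to be on PATH) and return the output file name."""
    cmd = las2dem_command(las_path, suffix)
    subprocess.run(cmd, check=True)  # raises if las2dem exits with an error
    return cmd[-1]
```

Usage: `run_las2dem("bare_earth/710622NW.las", "BE")` and `run_las2dem("full_feature/710622NW.las", "FF")` give the two surfaces distinct names from the start.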
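The subtraction step above is plain cell-by-cell arithmetic. Here is a minimal pure-Python sketch of what Int(DSM - DTM) computes, assuming both rasters are equally sized lists of rows and using `None` as a stand-in for NoData (a real 700,448-cell tile would normally stay in a raster tool; this only shows the math).

```python
def height_above_ground(dsm, dtm):
    """Cell-by-cell DSM minus DTM, truncated to whole meters.

    int() truncates toward zero, like ArcGIS's Int, so a cell where noise
    puts the DSM 0.3 m below the DTM becomes 0 rather than -1.
    """
    result = []
    for dsm_row, dtm_row in zip(dsm, dtm):
        row = []
        for top, ground in zip(dsm_row, dtm_row):
            if top is None or ground is None:
                row.append(None)  # NoData in either input stays NoData
            else:
                row.append(int(top - ground))
        result.append(row)
    return result
```

For example, a rooftop cell at 662.3 m over ground at 650.1 m becomes 12, while bare ground cells (differences of a few decimeters) become 0.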
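For the filtering part of the generalization step, ArcGIS's Majority Filter tool is one common choice. The sketch below is a simplified stand-in with my own rules, not a reproduction of that tool's options: each cell looks at its 3x3 window (clipped at the raster edge) and takes the most common value only if it fills more than half the window, which removes isolated noisy pixels while leaving clean edges alone.

```python
from collections import Counter


def majority_filter(grid):
    """Replace each cell with its 3x3 window's majority value, if one exists.

    Reads from the input grid and writes to a copy, so earlier replacements
    never influence later windows.
    """
    rows, cols = len(grid), len(grid[0])
    out = [row[:] for row in grid]
    for r in range(rows):
        for c in range(cols):
            window = [
                grid[rr][cc]
                for rr in range(max(r - 1, 0), min(r + 2, rows))
                for cc in range(max(c - 1, 0), min(c + 2, cols))
            ]
            value, count = Counter(window).most_common(1)[0]
            if count * 2 > len(window):  # strict majority of the window
                out[r][c] = value
    return out
```

A lone 9 m pixel inside a 3 m roof is absorbed into the roof, while a straight boundary between two height classes is left unchanged.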
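Raster to Polygon turns each contiguous patch of equal-valued cells into a polygon, which is why the cleanup above matters: fewer patches means fewer polygons for Cesium. As a rough illustration (not ArcGIS's implementation), this sketch labels 4-connected regions of equal value with a breadth-first search; the region count approximates how many polygons a classified image will produce. ArcGIS's Region Group tool lets you choose four- or eight-neighbor connectivity instead.

```python
from collections import deque


def label_regions(grid):
    """Label 4-connected regions of equal value; return (labels, count)."""
    rows, cols = len(grid), len(grid[0])
    labels = [[None] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if labels[r][c] is not None:
                continue  # already part of an earlier region
            value = grid[r][c]
            labels[r][c] = count
            queue = deque([(r, c)])
            while queue:
                cr, cc = queue.popleft()
                for nr, nc in ((cr - 1, cc), (cr + 1, cc), (cr, cc - 1), (cr, cc + 1)):
                    if (0 <= nr < rows and 0 <= nc < cols
                            and labels[nr][nc] is None and grid[nr][nc] == value):
                        labels[nr][nc] = count
                        queue.append((nr, nc))
            count += 1
    return labels, count
```

Running this on the filtered raster before Raster to Polygon gives a quick feel for whether the output will land in the hundreds or the hundreds of thousands.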
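The Project tool handles the UTM-to-WGS84 step for you; purely to show what happens to each vertex, here is the standard inverse transverse Mercator series (as given in Snyder's Map Projections: A Working Manual) applied to UTM. It assumes the WGS84 ellipsoid and the northern hemisphere, and it ignores any datum shift (if the source data are on NAD83, the difference is around a meter or two, which I'm assuming is negligible for visualization). Zone 11 has a central meridian of 117° W.

```python
import math

# WGS84 ellipsoid and UTM constants
A = 6378137.0                 # semi-major axis (m)
F = 1 / 298.257223563         # flattening
E2 = F * (2 - F)              # first eccentricity squared
EP2 = E2 / (1 - E2)           # second eccentricity squared
K0 = 0.9996                   # UTM scale factor on the central meridian


def utm_to_wgs84(easting, northing, zone):
    """Convert northern-hemisphere UTM meters to (lat, lon) in degrees."""
    x = easting - 500000.0                      # remove the false easting
    m = northing / K0                           # meridian arc length
    mu = m / (A * (1 - E2 / 4 - 3 * E2**2 / 64 - 5 * E2**3 / 256))
    e1 = (1 - math.sqrt(1 - E2)) / (1 + math.sqrt(1 - E2))
    # Footpoint latitude via series expansion
    phi1 = (mu
            + (3 * e1 / 2 - 27 * e1**3 / 32) * math.sin(2 * mu)
            + (21 * e1**2 / 16 - 55 * e1**4 / 32) * math.sin(4 * mu)
            + (151 * e1**3 / 96) * math.sin(6 * mu)
            + (1097 * e1**4 / 512) * math.sin(8 * mu))
    sin1, cos1, tan1 = math.sin(phi1), math.cos(phi1), math.tan(phi1)
    c1 = EP2 * cos1**2
    t1 = tan1**2
    n1 = A / math.sqrt(1 - E2 * sin1**2)                 # prime vertical radius
    r1 = A * (1 - E2) / (1 - E2 * sin1**2) ** 1.5        # meridional radius
    d = x / (n1 * K0)
    lat = phi1 - (n1 * tan1 / r1) * (
        d**2 / 2
        - (5 + 3 * t1 + 10 * c1 - 4 * c1**2 - 9 * EP2) * d**4 / 24
        + (61 + 90 * t1 + 298 * c1 + 45 * t1**2 - 252 * EP2 - 3 * c1**2) * d**6 / 720)
    lon0 = math.radians(zone * 6 - 183)                  # zone central meridian
    lon = lon0 + (
        d
        - (1 + 2 * t1 + c1) * d**3 / 6
        + (5 - 2 * c1 + 28 * t1 - 3 * c1**2 + 8 * EP2 + 24 * t1**2) * d**5 / 120) / cos1
    return math.degrees(lat), math.degrees(lon)
```

A point on zone 11's central meridian at a northing of about 6,094,791 m comes out at roughly 55° N, 117° W.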
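Before uploading, a quick check can catch two easy mistakes from the steps above: exporting polygons still in UTM meters (skipping the Project step), or losing the height field on the way through QGIS. This sketch assumes the height field is "GRIDCODE" as in my data and that features are Polygon or MultiPolygon; the file name in the usage example is hypothetical.

```python
import json


def iter_positions(geometry):
    """Yield (x, y) for every vertex of a Polygon or MultiPolygon."""
    coords = geometry.get("coordinates", [])
    if geometry.get("type") == "Polygon":
        polygons = [coords]
    elif geometry.get("type") == "MultiPolygon":
        polygons = coords
    else:
        polygons = []  # other geometry types are not checked
    for polygon in polygons:
        for ring in polygon:
            for position in ring:
                yield position[0], position[1]


def check_geojson(collection, height_field="GRIDCODE"):
    """Return a list of problems: missing heights or non lon/lat vertices."""
    problems = []
    for i, feature in enumerate(collection.get("features", [])):
        height = (feature.get("properties") or {}).get(height_field)
        if not isinstance(height, (int, float)):
            problems.append(f"feature {i}: no numeric {height_field}")
        for x, y in iter_positions(feature.get("geometry") or {}):
            if not (-180 <= x <= 180 and -90 <= y <= 90):
                problems.append(f"feature {i}: ({x}, {y}) is not lon/lat")
                break  # one coordinate report per feature is enough
    return problems


def check_file(path, height_field="GRIDCODE"):
    """Load a GeoJSON file and run check_geojson on it."""
    with open(path, encoding="utf-8") as f:
        return check_geojson(json.load(f), height_field)
```

Usage: `check_file("buildings.geojson")` should return an empty list before the file goes to your host.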

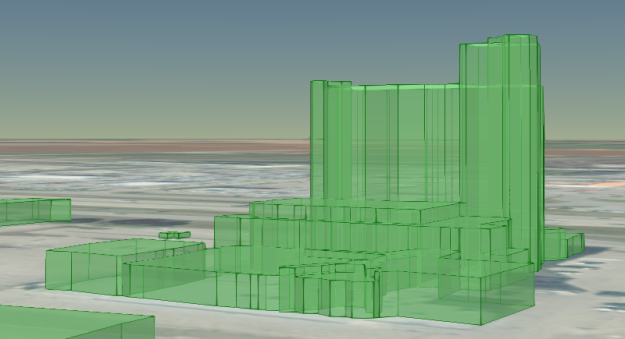

- Switching to Cesium, create a map and load your GeoJSON (general example here). Loop through the GeoJSON polygons, setting each polygon's height to 0 and its extruded height to the height attribute in your data (for me, that was stored in the “GRIDCODE” field).

- That’s it! You can see my interactive example here (you will need a “modern” browser). Here’s a sample showing the Paradise Inn (pictures from my example and from Tom Shield Realty):

Note: I tried and failed to add a link to the LiDAR Open Data License in my example. Hopefully, this will be sufficient.